Prototyping Studio

Test Agents Before You Deploy

Validate performance, cost, and behavior across models, prompts, and workflows in an environment that mirrors production, before agents affect real business operations.

Put Your Agents To The Test

Before agents ever touch production systems, validate how they perform across models, prompts, parameters, and edge cases in a controlled environment designed to mirror production.

Iterate Without Risk

Test and refine agent logic, prompts, tools, and workflows in isolation. Improve behavior without exposing live systems or disrupting active workflows.

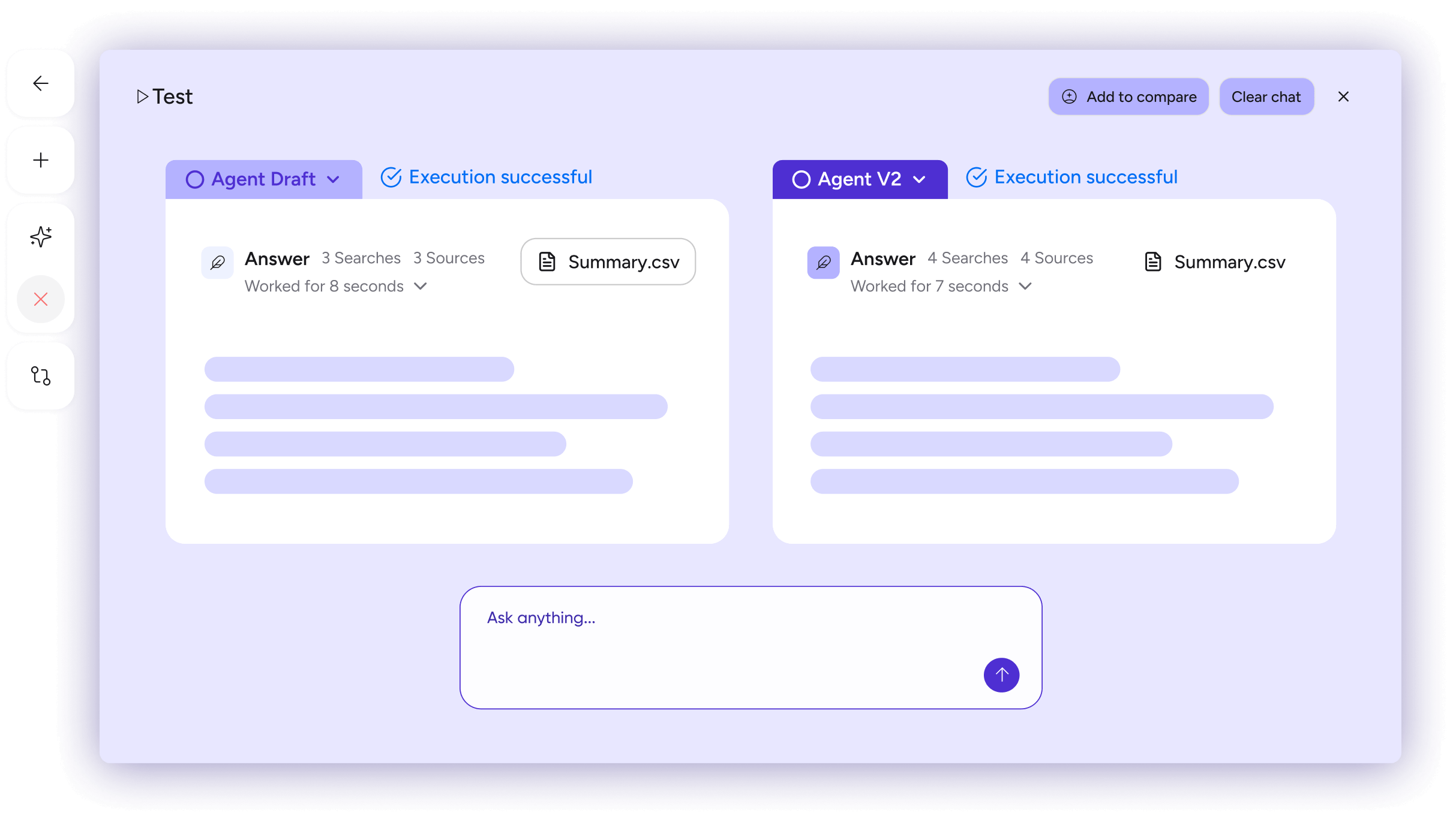

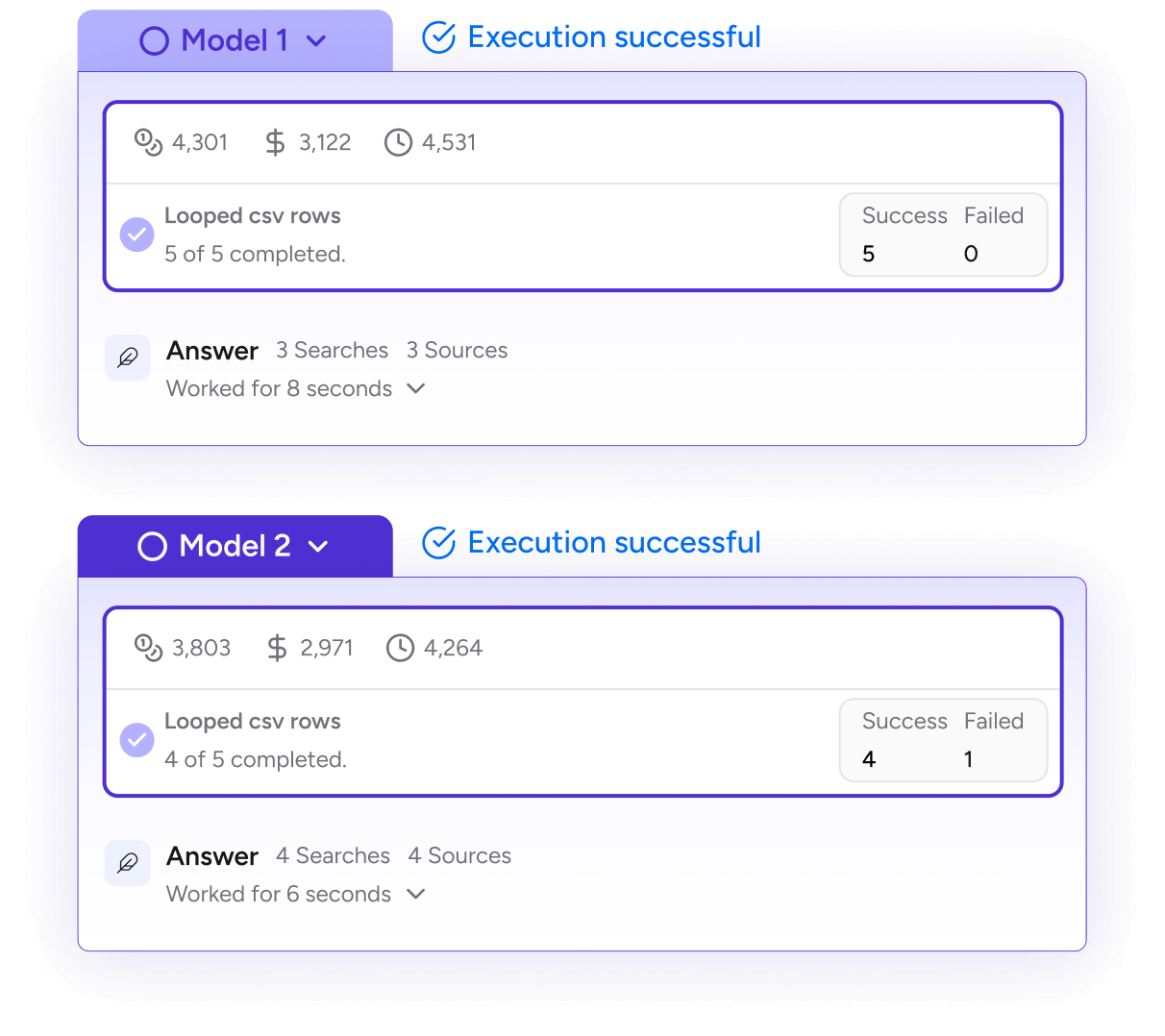

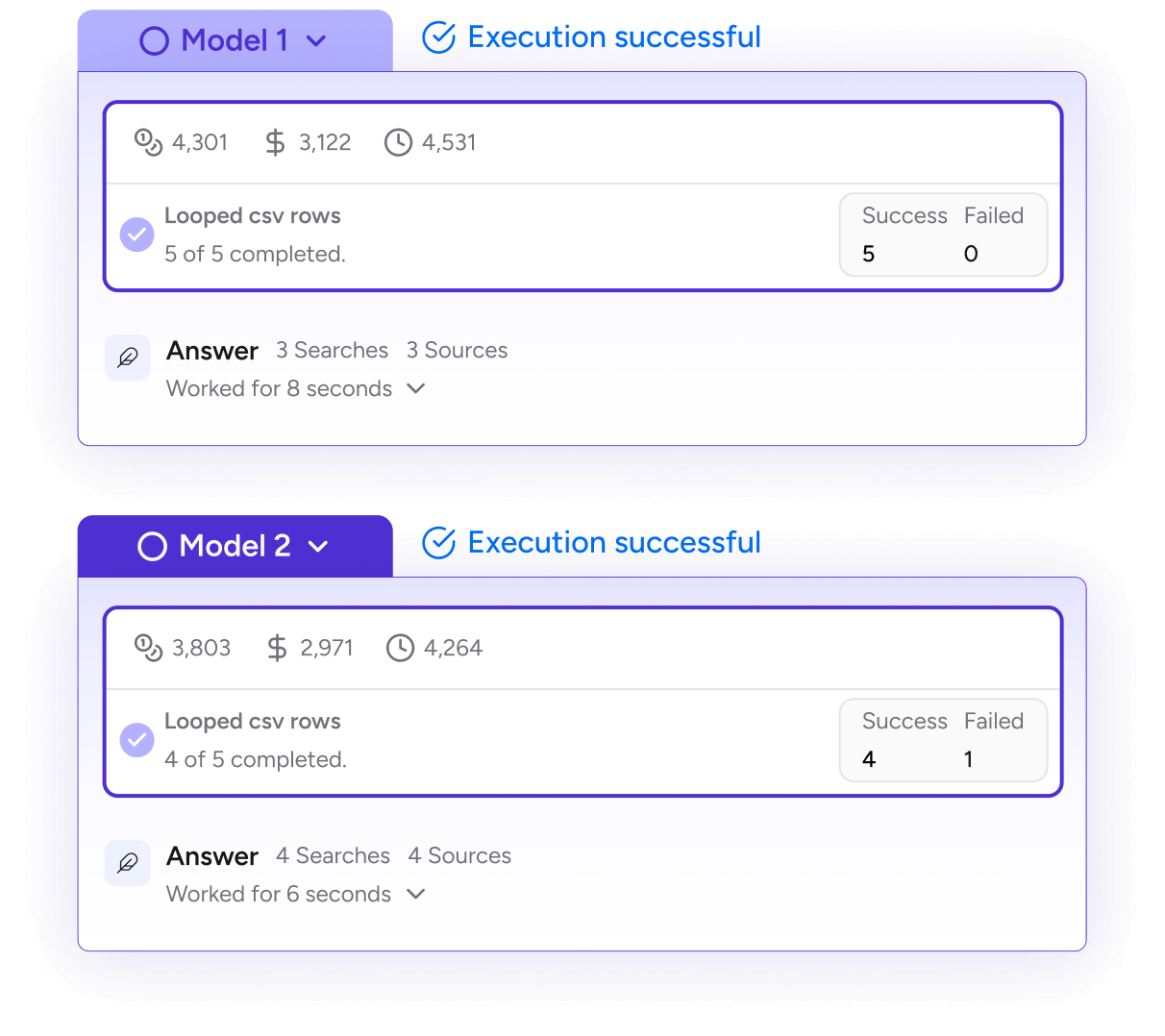

Compare Variations With Data

Run agent configurations side-by-side. Evaluate model choices, prompt structures, and workflow logic to measure impact on accuracy, latency, and output consistency.
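As a rough illustration of a side-by-side comparison, the sketch below runs two agent configurations over the same test cases and reports accuracy and mean latency. It is not Airia's API: `evaluate`, `config_a`, `config_b`, and the test cases are hypothetical stand-ins, and each "agent" is simply a Python function that takes a prompt and returns text.

```python
import statistics
import time
from typing import Callable

def evaluate(agent: Callable[[str], str], cases: list[tuple[str, str]]) -> dict[str, float]:
    """Run one agent configuration over shared test cases (hypothetical helper)."""
    latencies = []
    correct = 0
    for prompt, expected in cases:
        start = time.perf_counter()
        answer = agent(prompt)
        latencies.append(time.perf_counter() - start)
        # Exact-match scoring, ignoring case and surrounding whitespace.
        if answer.strip().lower() == expected.strip().lower():
            correct += 1
    return {
        "accuracy": correct / len(cases),
        "mean_latency_s": statistics.mean(latencies),
    }

# Stand-in configurations; a real test would call a model or workflow here.
def config_a(prompt: str) -> str:
    return "4" if "2 + 2" in prompt else "unknown"

def config_b(prompt: str) -> str:
    answers = {"What is 2 + 2?": "4", "What is the capital of France?": "Paris"}
    return answers.get(prompt, "unknown")

cases = [
    ("What is 2 + 2?", "4"),
    ("What is the capital of France?", "Paris"),
]

results = {name: evaluate(agent, cases) for name, agent in
           {"config_a": config_a, "config_b": config_b}.items()}

for name, metrics in results.items():
    print(name, metrics)
```

Because both configurations see identical cases, any difference in the metrics comes from the configuration itself rather than from the inputs. Exact-match scoring is the simplest choice here; open-ended outputs would need a different scoring rule.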

Understand Cost Before It Scales

Preview projected token usage, model spend, and infrastructure impact before rollout. Identify tradeoffs early so performance gains don’t create financial surprises.
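To make the cost arithmetic concrete, here is a minimal sketch of a monthly spend projection from token counts. Everything in it is an assumption for illustration: the `ModelPricing` class, the `projected_monthly_cost` function, the per-token prices, and the usage figures are hypothetical and do not reflect any vendor's rates or Airia's own calculations.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelPricing:
    # Hypothetical prices in USD per 1,000,000 tokens; not real vendor rates.
    input_per_million: float
    output_per_million: float

def projected_monthly_cost(
    pricing: ModelPricing,
    runs_per_day: int,
    input_tokens_per_run: int,
    output_tokens_per_run: int,
    days: int = 30,
) -> float:
    """Estimate model spend in USD for a period of `days` days."""
    runs = runs_per_day * days
    input_cost = runs * input_tokens_per_run / 1_000_000 * pricing.input_per_million
    output_cost = runs * output_tokens_per_run / 1_000_000 * pricing.output_per_million
    return input_cost + output_cost

# Illustrative scenario: 1,000 runs per day, 1,500 input and 500 output tokens per run.
example_pricing = ModelPricing(input_per_million=2.0, output_per_million=8.0)
monthly = projected_monthly_cost(example_pricing, 1000, 1500, 500)
print(f"Projected monthly spend: ${monthly:.2f}")
```

In this scenario the 30-day total is 45 million input tokens and 15 million output tokens, so the projection is 45 × $2 + 15 × $8 = $210. Running the same function with a second pricing entry shows how a model switch changes spend before rollout. The sketch covers token spend only and leaves out infrastructure costs.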

From Experimentation to Production Readiness

Airia’s Prototyping Studio provides a controlled environment where teams can test, compare, and optimize AI agents before they scale into live workflows.

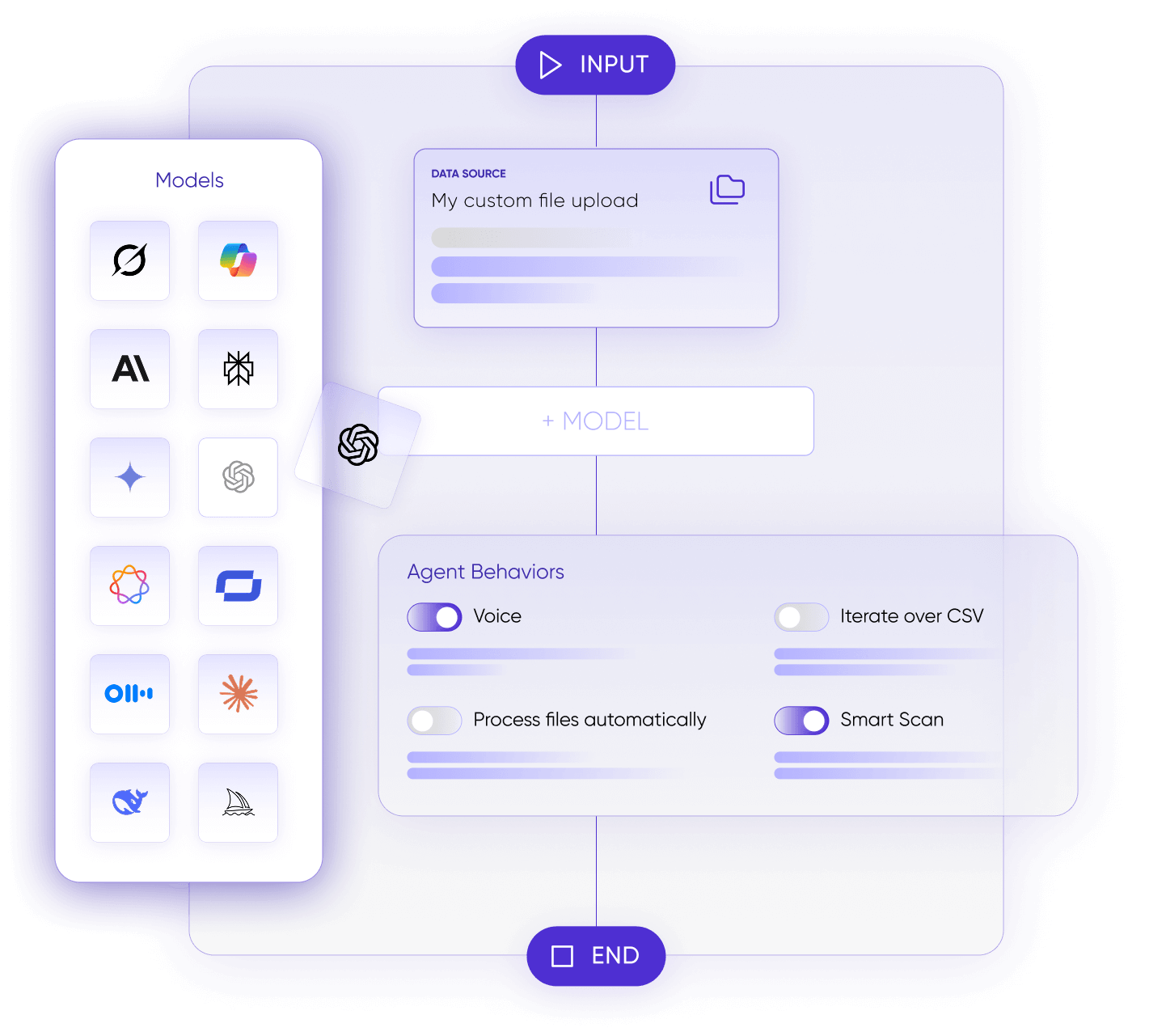

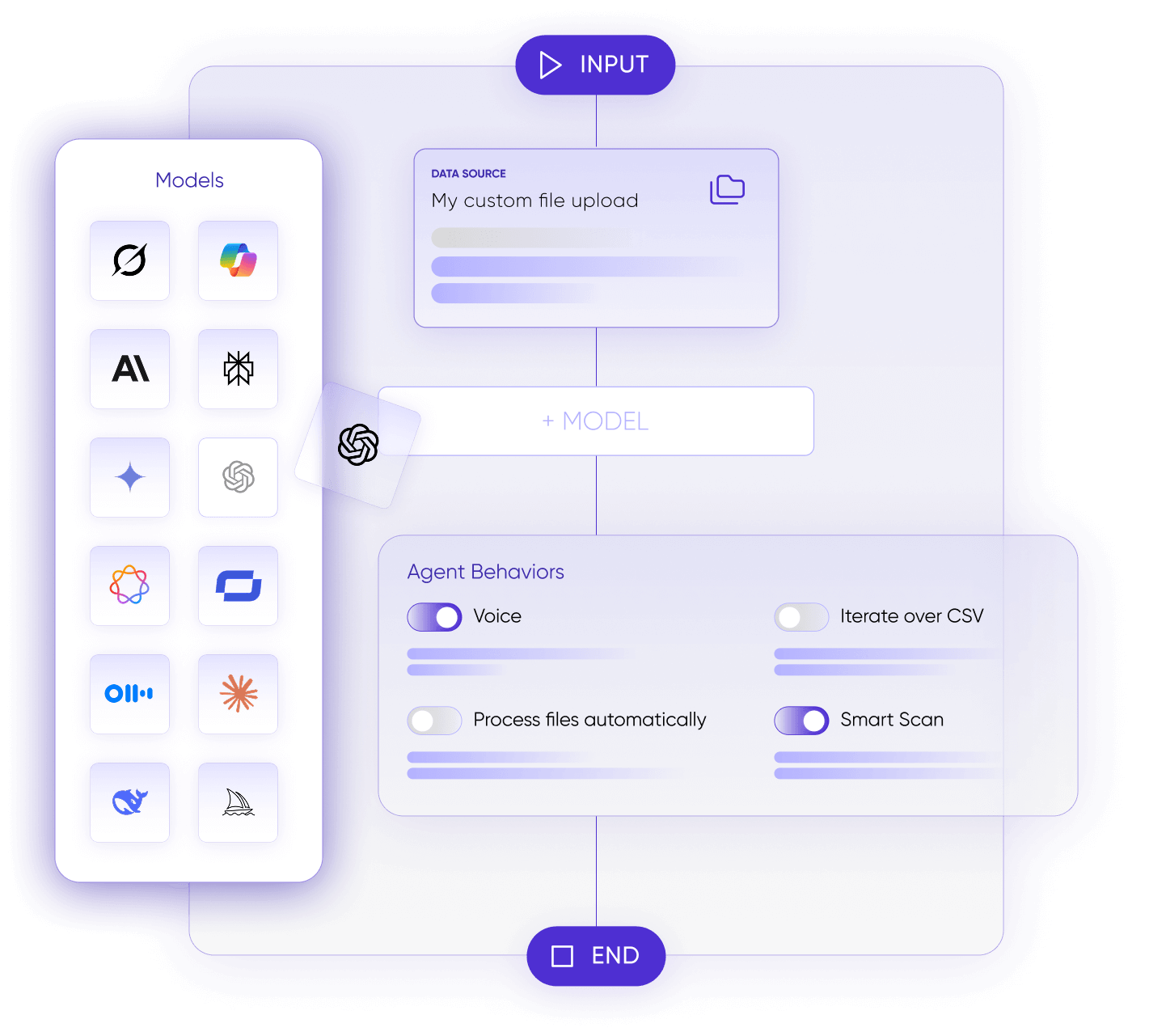

Select the Right Model for Every Task

Compare how different models perform on the same task to understand tradeoffs in accuracy, latency, and cost. Make deliberate model decisions based on real performance data, not assumptions.

Fine-Tune Prompts for Better Results

Refine instructions, structure, and parameters to improve accuracy, consistency, and output quality. Test variations methodically to determine what produces the strongest results before deployment.

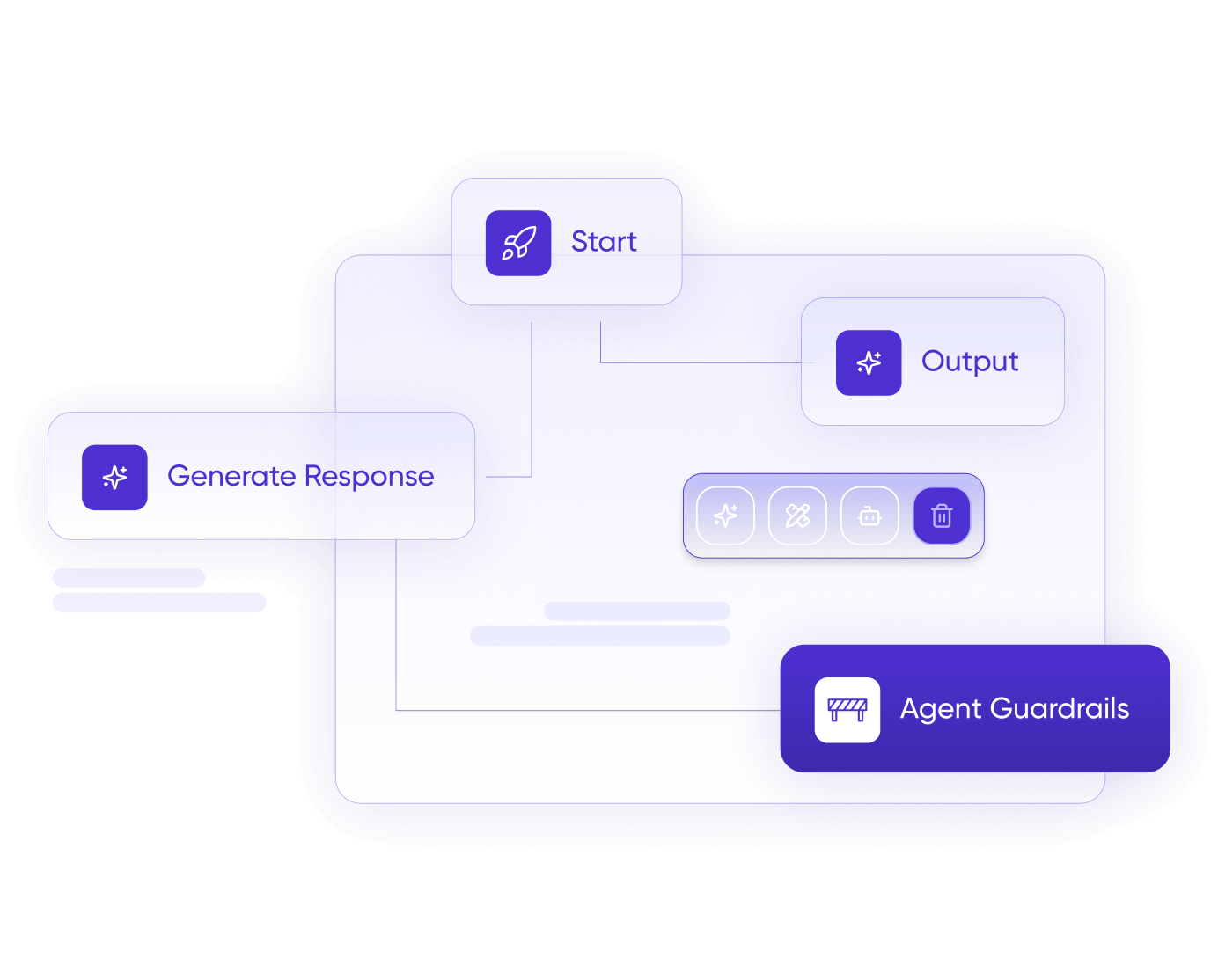

Validate Within Enterprise Guardrails

Test agents inside a controlled environment that enforces access policies and governance standards. Ensure workflows align with security requirements before they interact with live systems or sensitive data.

Real impact backed by real results.

From smarter workflows to trusted security, Airia drives real results for enterprises.

“Airia makes it easy for our go-to-market teams to experiment with building agents for their needs, which means they don’t need to lean on us for deployment. Airia’s security capabilities give me the confidence to let them use AI safely.”